2D/3D image acquisition with data fusion

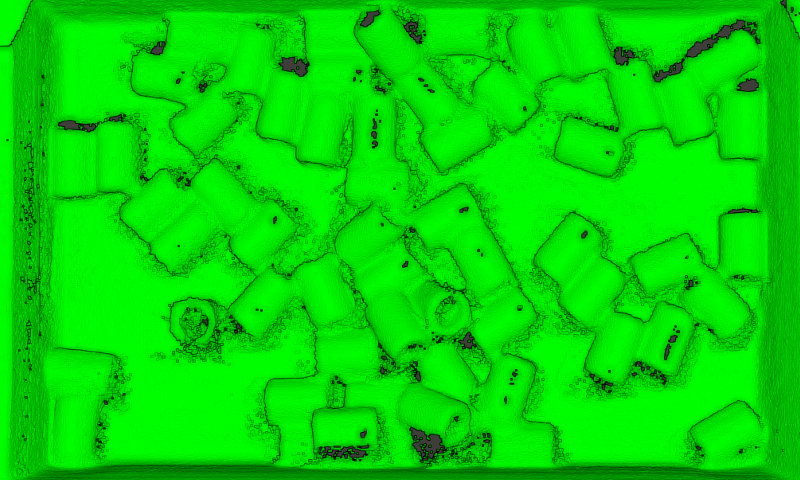

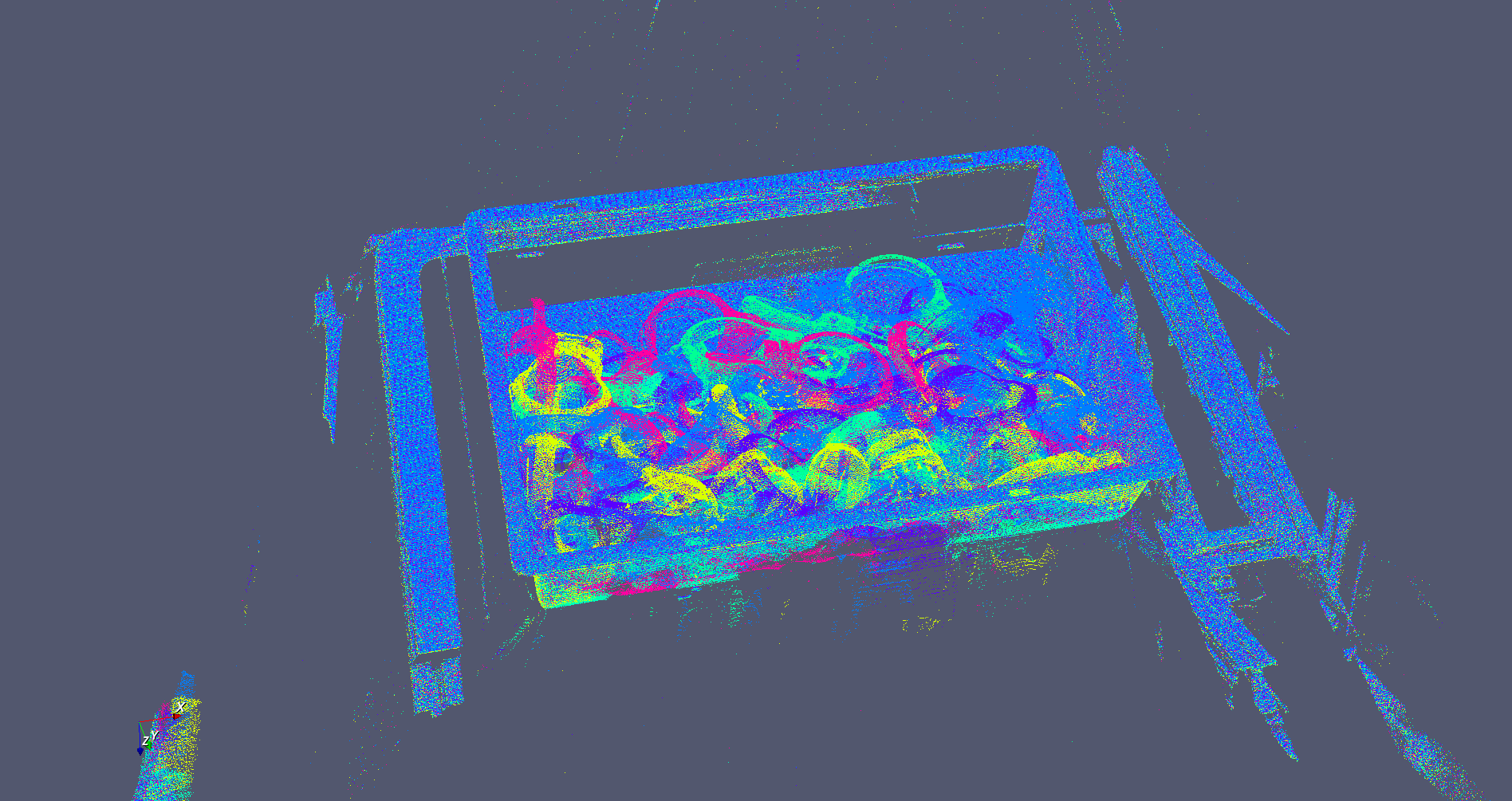

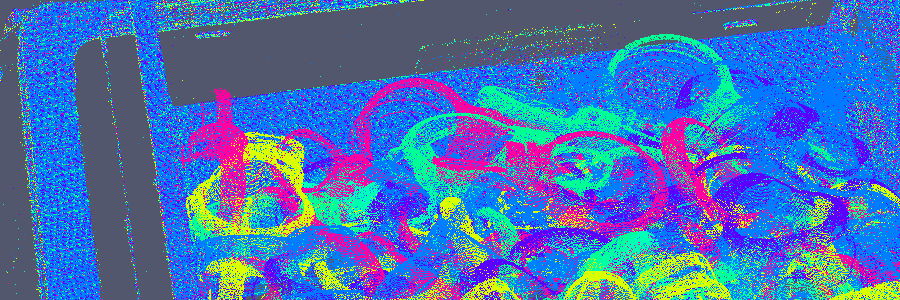

A combined 2D/3D sensor (Intel RealSense) mounted on the robot arm captures 2D color images and a fused depth image of the entire bin. Because the 3D images are taken from the moving arm, captures from several viewing angles and positions can be fused into a comprehensive 3D image of the objects.
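Fusing captures from several arm positions requires expressing every capture in one common frame. The sketch below assumes that each capture's camera pose in the robot base frame is known as a 4x4 matrix (for example from the arm's joint angles plus a hand-eye calibration; the source does not say how this is done). It transforms each point cloud into the base frame and merges overlapping points by averaging them per voxel. The names `fuse_views`, `transform_points` and `translation` are hypothetical, and voxel averaging is a deliberately simple stand-in for more elaborate fusion methods.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]
Matrix4 = Sequence[Sequence[float]]  # row-major 4x4 homogeneous transform (camera -> base)


def transform_points(pose: Matrix4, points: List[Point]) -> List[Point]:
    """Map points from the camera frame into the robot base frame."""
    return [
        tuple(pose[r][0] * x + pose[r][1] * y + pose[r][2] * z + pose[r][3] for r in range(3))
        for x, y, z in points
    ]


def fuse_views(views: List[Tuple[Matrix4, List[Point]]], voxel: float) -> List[Point]:
    """Merge captures taken from several arm poses into one cloud in the base frame.

    Points that fall into the same voxel (edge length `voxel`, in metres) are
    averaged, so surfaces seen from several viewpoints are not duplicated.
    """
    cells: Dict[Tuple[int, ...], List[Point]] = defaultdict(list)
    for camera_pose, cloud in views:
        for p in transform_points(camera_pose, cloud):
            key = tuple(math.floor(c / voxel) for c in p)
            cells[key].append(p)
    fused: List[Point] = []
    for key in sorted(cells):
        pts = cells[key]
        fused.append(tuple(sum(p[i] for p in pts) / len(pts) for i in range(3)))
    return fused


def translation(tx: float, ty: float, tz: float) -> List[List[float]]:
    """Helper: a pure translation as a 4x4 homogeneous matrix."""
    return [[1, 0, 0, tx], [0, 1, 0, ty], [0, 0, 1, tz], [0, 0, 0, 1]]


# The same surface point seen from two camera positions 0.1 m apart along x:
view_a = (translation(0.0, 0.0, 0.5), [(0.025, 0.0, 0.0)])
view_b = (translation(0.1, 0.0, 0.5), [(-0.075, 0.0, 0.0)])
print(fuse_views([view_a, view_b], voxel=0.01))  # one merged point near (0.025, 0.0, 0.5)
```

The voxel size trades detail against noise suppression; a point seen twice collapses into one averaged point instead of producing a doubled surface.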

This mounting also makes an external, permanently installed sensor unnecessary, which could otherwise restrict the arm's movements.

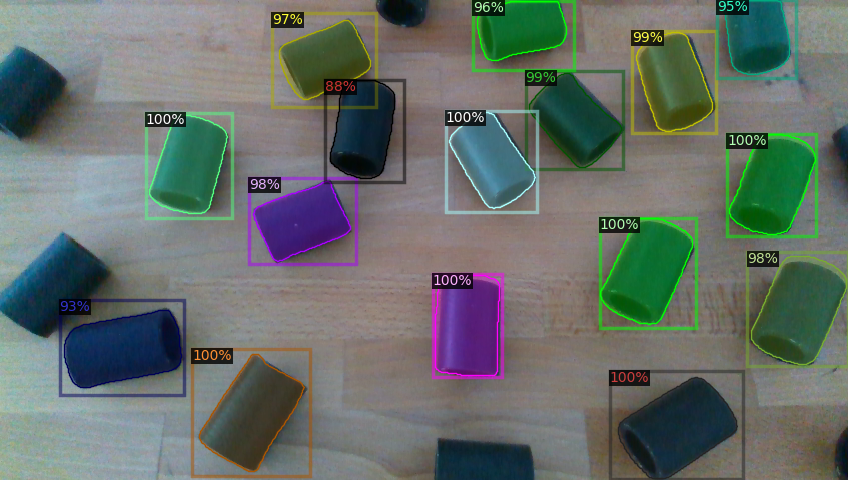

Object detection and localization with AI support

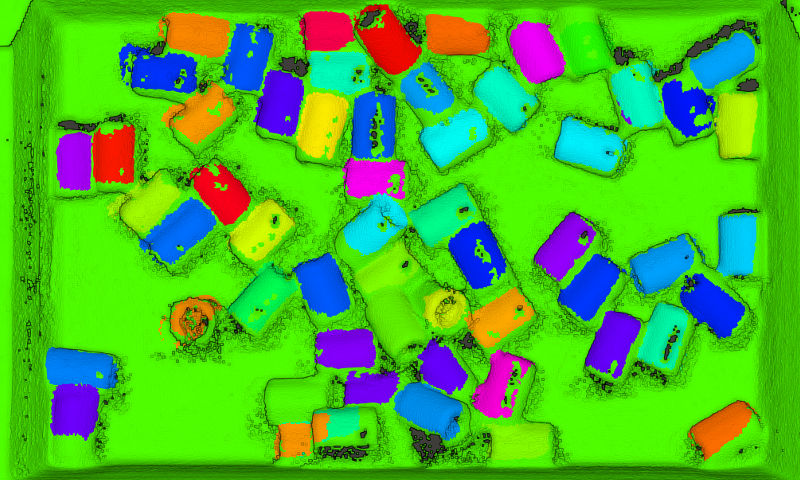

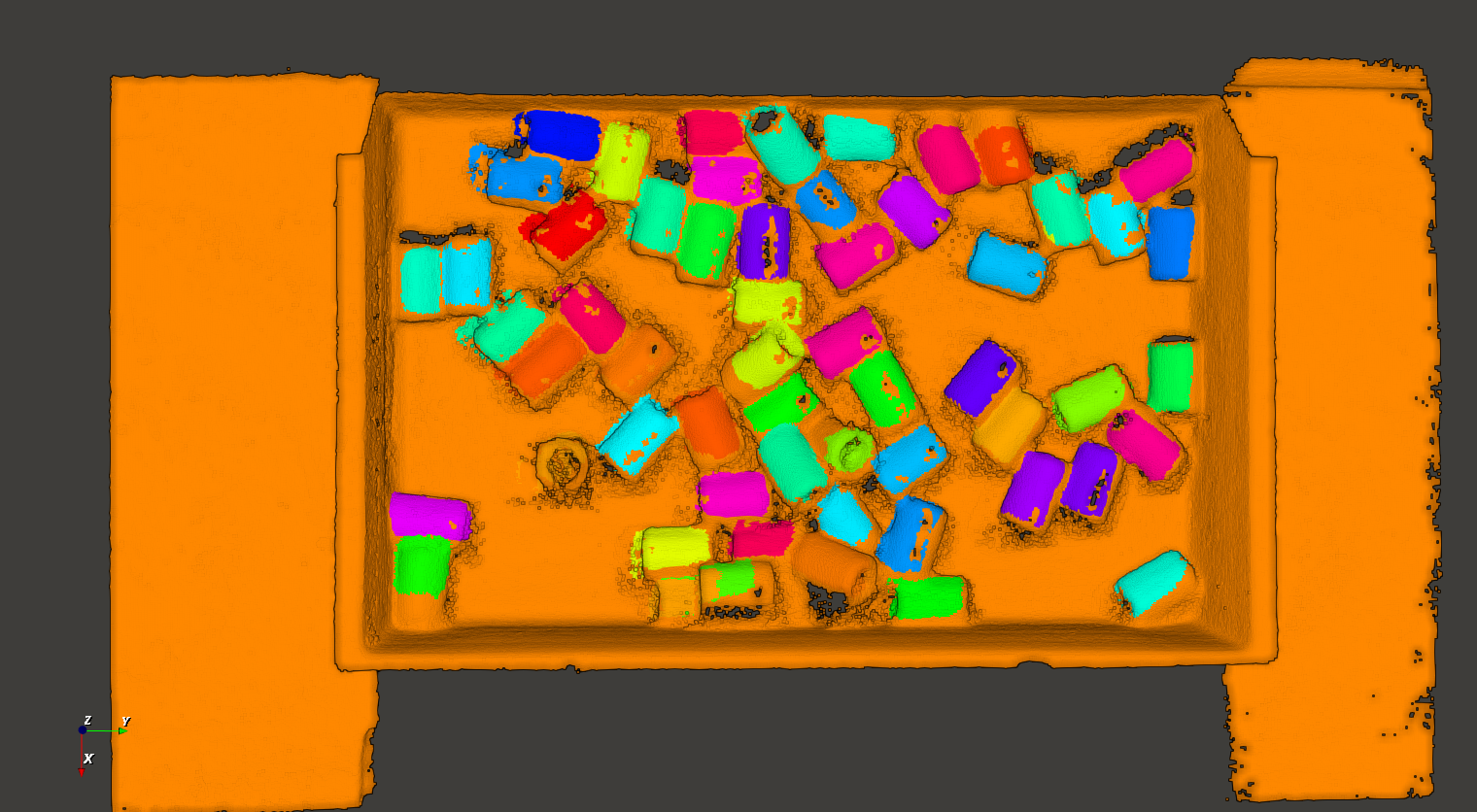

Trained artificial intelligence methods detect the objects in the captured 2D image and pre-segment it. The positions found this way are then used to initialize object detection in the 3D depth images. The objects are localized precisely by comparing the 3D data at these positions with a model of each object. This comparison is repeated iteratively, refining the estimated object position step by step. Because the AI methods supply good initial positions, this detection runs faster and can yield higher-quality results.
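An iterative model-to-data comparison of this kind is commonly implemented with Iterative Closest Point (ICP); the source does not name its algorithm, so ICP is an assumption here. The sketch below is reduced to 2D for brevity: each iteration matches every model point to its nearest scene point and then solves for the best rigid pose in closed form. The `init` argument plays the role of the start position delivered by the 2D detector. All function names are hypothetical.

```python
import math
from typing import List, Tuple

Point2 = Tuple[float, float]
Pose2 = Tuple[float, float, float]  # (x, y, theta): maps model coordinates into the scene


def transform2d(pose: Pose2, pts: List[Point2]) -> List[Point2]:
    x, y, th = pose
    c, s = math.cos(th), math.sin(th)
    return [(c * px - s * py + x, s * px + c * py + y) for px, py in pts]


def centroid(pts: List[Point2]) -> Point2:
    n = len(pts)
    return (sum(p[0] for p in pts) / n, sum(p[1] for p in pts) / n)


def best_rigid(src: List[Point2], dst: List[Point2]) -> Pose2:
    """Closed-form least-squares rotation and translation mapping src[i] onto dst[i]."""
    (sx, sy), (dx, dy) = centroid(src), centroid(dst)
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    th = math.atan2(cross, dot)
    c, s = math.cos(th), math.sin(th)
    return (dx - (c * sx - s * sy), dy - (s * sx + c * sy), th)


def icp(model: List[Point2], scene: List[Point2], init: Pose2,
        iterations: int = 50, tol: float = 1e-10) -> Pose2:
    """Refine `init` (e.g. from a 2D detector) by alternating matching and alignment."""
    pose = init
    for _ in range(iterations):
        placed = transform2d(pose, model)
        # 1) correspondences: closest scene point for every placed model point (brute force)
        matches = [min(scene, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in placed]
        # 2) alignment: best rigid pose of the original model onto those matches
        new_pose = best_rigid(model, matches)
        converged = max(abs(a - b) for a, b in zip(new_pose, pose)) < tol
        pose = new_pose
        if converged:
            break
    return pose
```

ICP only converges to the correct pose when the start is close enough for the first correspondences to be mostly right, which is exactly why a good initial position from the 2D detection speeds up and stabilizes the search.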

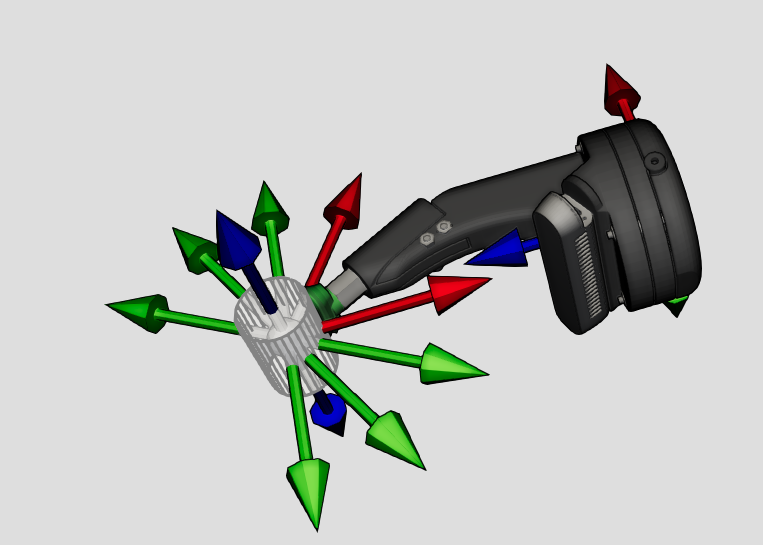

Motion and grasp planning

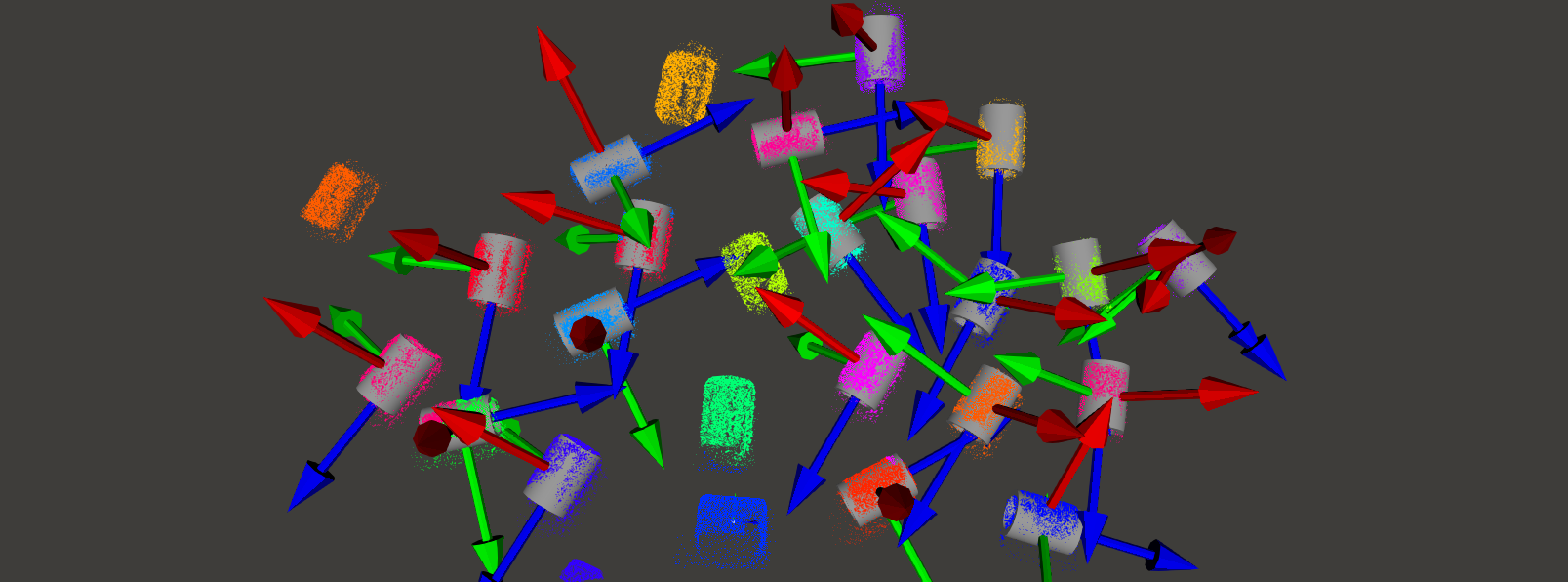

With the object poses and grasp points now known, a grasp motion can be planned. The tool in use and each object's surroundings determine the arm positions from which the object can be picked up securely and which can be reached without collision. Easily reachable objects are picked first, so objects that are initially partly covered by others are gradually uncovered and become easier and easier to reach.
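The "easiest first" strategy can be illustrated as a greedy ordering. This minimal sketch assumes a hypothetical scene graph `lying_on_top` (which objects rest on which) and a hypothetical per-grasp `approach_cost`; neither is described in the source. An object becomes a candidate only once it is reachable and nothing still in the bin lies on top of it.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class GraspCandidate:
    object_id: str
    reachable: bool        # a collision-free arm pose exists for this grasp (from the planner)
    approach_cost: float   # e.g. joint-space path length; lower means easier to reach


def pick_sequence(candidates: List[GraspCandidate],
                  lying_on_top: Dict[str, Set[str]]) -> List[str]:
    """Greedy order: always take the cheapest object that nothing else still covers.

    `lying_on_top` maps an object id to the ids of objects resting on it
    (a hypothetical scene graph derived from the 3D data).
    """
    remaining = {c.object_id: c for c in candidates}
    order: List[str] = []
    while remaining:
        free = [c for oid, c in remaining.items()
                if c.reachable
                and not any(o in remaining for o in lying_on_top.get(oid, set()))]
        if not free:
            break  # nothing graspable right now: a real cell would re-scan and re-plan
        best = min(free, key=lambda c: c.approach_cost)
        order.append(best.object_id)
        del remaining[best.object_id]
    return order
```

In a real cell the scene graph and reachability would be recomputed from a fresh scan after every pick rather than fixed up front; the static graph here only shows how removing top objects frees the ones underneath.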

Before execution, the robot arm motions are checked for possible collisions using the environment information captured by the sensors. Using full-volume models of both the robot and the obstacles, a safe, collision-free robot motion can be planned.
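A common way to check a planned motion against volume models is to sample the path finely and test the robot's volume at each sample against every obstacle. The sketch below assumes that the robot is approximated by spheres along its links and obstacles by axis-aligned boxes; the source speaks only of full-volume models, so this approximation and the hypothetical planar two-link arm (`planar_arm_spheres`) are illustrative stand-ins.

```python
import math
from typing import Callable, List, Sequence, Tuple

Sphere = Tuple[float, float, float, float]                            # (x, y, z, radius)
Box = Tuple[Tuple[float, float, float], Tuple[float, float, float]]   # (min corner, max corner)


def sphere_hits_box(s: Sphere, box: Box) -> bool:
    x, y, z, r = s
    lo, hi = box
    # squared distance from the sphere centre to the closest point of the box
    d2 = 0.0
    for c, a, b in zip((x, y, z), lo, hi):
        closest = min(max(c, a), b)
        d2 += (c - closest) ** 2
    return d2 <= r * r


def path_is_collision_free(q_start: Sequence[float], q_goal: Sequence[float],
                           robot_spheres: Callable[[Sequence[float]], List[Sphere]],
                           obstacles: List[Box], step: float = 0.01) -> bool:
    """Check the straight joint-space path from q_start to q_goal at `step`-rad spacing."""
    dist = max(abs(b - a) for a, b in zip(q_start, q_goal))
    n = max(1, math.ceil(dist / step))
    for i in range(n + 1):
        t = i / n
        q = [a + t * (b - a) for a, b in zip(q_start, q_goal)]
        for s in robot_spheres(q):
            if any(sphere_hits_box(s, box) for box in obstacles):
                return False
    return True


def planar_arm_spheres(q: Sequence[float], l1: float = 0.4, l2: float = 0.3,
                       r: float = 0.05) -> List[Sphere]:
    """Hypothetical 2-link arm in the z = 0 plane, each link covered by spheres spaced r apart."""
    elbow = (l1 * math.cos(q[0]), l1 * math.sin(q[0]))
    tip = (elbow[0] + l2 * math.cos(q[0] + q[1]), elbow[1] + l2 * math.sin(q[0] + q[1]))
    spheres: List[Sphere] = []
    for (ax, ay), (bx, by), length in (((0.0, 0.0), elbow, l1), (elbow, tip, l2)):
        k = max(1, math.ceil(length / r))
        for j in range(k + 1):
            t = j / k
            spheres.append((ax + t * (bx - ax), ay + t * (by - ay), 0.0, r))
    return spheres
```

Discrete sampling can miss very thin obstacles between two samples; the step size, or a small safety margin added to the sphere radii, has to be chosen with that in mind.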

Trajectory planning takes place entirely in DRS and uses DRS's own kinematic models of the robots. Motions can therefore be planned for robot arms from any manufacturer, independent of the robot type and of its built-in planning capabilities.
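One way to keep planning independent of the manufacturer is to describe each arm purely as data and run the same kinematics code for all of them. The sketch below assumes the standard Denavit-Hartenberg (DH) convention for that data; the source does not say which kinematic description DRS uses internally. `DHJoint`, `forward_kinematics` and the `PLANAR_2R` table are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence

Matrix = List[List[float]]


@dataclass(frozen=True)
class DHJoint:
    """One revolute joint in standard Denavit-Hartenberg convention."""
    a: float                    # link length along x_i (m)
    alpha: float                # link twist about x_i (rad)
    d: float                    # link offset along z_{i-1} (m)
    theta_offset: float = 0.0   # constant added to the joint angle (rad)


def matmul(A: Matrix, B: Matrix) -> Matrix:
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def dh_transform(j: DHJoint, q: float) -> Matrix:
    """Rot_z(theta) * Trans_z(d) * Trans_x(a) * Rot_x(alpha) for one joint."""
    th = q + j.theta_offset
    ct, st = math.cos(th), math.sin(th)
    ca, sa = math.cos(j.alpha), math.sin(j.alpha)
    return [[ct, -st * ca, st * sa, j.a * ct],
            [st, ct * ca, -ct * sa, j.a * st],
            [0.0, sa, ca, j.d],
            [0.0, 0.0, 0.0, 1.0]]


def forward_kinematics(model: Sequence[DHJoint], q: Sequence[float]) -> Matrix:
    """Flange pose in the robot base frame; the same code serves every arm."""
    if len(model) != len(q):
        raise ValueError("one joint value per DH row expected")
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for joint, qi in zip(model, q):
        T = matmul(T, dh_transform(joint, qi))
    return T


# A hypothetical planar 2-joint arm; another vendor's 6-axis arm would simply be a six-row table.
PLANAR_2R = [DHJoint(a=0.4, alpha=0.0, d=0.0), DHJoint(a=0.3, alpha=0.0, d=0.0)]
```

Because the arm type lives only in the parameter table, collision checking and trajectory planning can call `forward_kinematics` without knowing which manufacturer built the robot.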

Execution on the robot

The system sends the individually computed arm motion directly to the robot arm for execution. The planned motion is transmitted to the real arm piece by piece and executed under supervision. Differences in capability between arms from different manufacturers are compensated, so the end user does not have to write any manufacturer-specific programs.
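Piecewise, supervised execution can be pictured as a loop that sends one chunk of the trajectory at a time through a vendor-specific adapter and compares the reported joint positions with the plan before continuing. The adapter interface (`RobotAdapter`, `execute_segment`, `joint_positions`) and the `SimulatedArm` stand-in are hypothetical; real drivers and their protocols differ per manufacturer and are not described in the source.

```python
from abc import ABC, abstractmethod
from typing import List, Sequence

JointVector = List[float]


class RobotAdapter(ABC):
    """Hypothetical vendor-specific driver; one subclass per manufacturer."""

    @abstractmethod
    def execute_segment(self, waypoints: Sequence[JointVector]) -> None: ...

    @abstractmethod
    def joint_positions(self) -> JointVector: ...


class SimulatedArm(RobotAdapter):
    """Stand-in driver: ends each segment at the last waypoint plus an optional fixed offset."""

    def __init__(self, start: JointVector, drift: float = 0.0) -> None:
        self.q = list(start)
        self.drift = drift

    def execute_segment(self, waypoints: Sequence[JointVector]) -> None:
        self.q = [v + self.drift for v in waypoints[-1]]

    def joint_positions(self) -> JointVector:
        return list(self.q)


def stream_trajectory(robot: RobotAdapter, trajectory: Sequence[JointVector],
                      chunk: int = 5, tolerance: float = 1e-3) -> int:
    """Send the trajectory in chunks; abort if the arm deviates from the plan.

    Returns the number of segments executed.
    """
    sent = 0
    for start in range(0, len(trajectory), chunk):
        segment = trajectory[start:start + chunk]
        robot.execute_segment(segment)
        actual = robot.joint_positions()
        error = max(abs(a - b) for a, b in zip(actual, segment[-1]))
        if error > tolerance:
            raise RuntimeError(f"tracking error {error:.4f} rad after segment {sent}")
        sent += 1
    return sent
```

Keeping the vendor differences behind one small interface is what lets the planner stay manufacturer-neutral: only the adapter subclass changes from one robot brand to the next.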

Once an object has been picked from the unsorted bin, its pose is precisely known, so it can be passed on in a controlled way, for example to assembly or machining processes, or set down again in an orderly arrangement.
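Knowing exactly how the object sits in the gripper is what makes targeted placement possible: the tool pose for setting the object down follows from the desired object pose and the object's pose relative to the tool. The sketch below works with planar poses `(x, y, theta)` for brevity; the function names are hypothetical and the relation `T_base_tool = T_base_obj * inverse(T_tool_obj)` is ordinary rigid-transform algebra, not a documented DRS function.

```python
import math
from typing import Tuple

Pose2 = Tuple[float, float, float]  # (x, y, theta) of a frame, expressed in its parent frame


def compose(a: Pose2, b: Pose2) -> Pose2:
    """a * b: pose b (given relative to frame a) expressed in a's parent frame."""
    ax, ay, at = a
    bx, by, bt = b
    c, s = math.cos(at), math.sin(at)
    return (ax + c * bx - s * by, ay + s * bx + c * by, at + bt)


def inverse(p: Pose2) -> Pose2:
    """Inverse rigid transform: rotation R^T and translation -R^T t."""
    x, y, t = p
    c, s = math.cos(t), math.sin(t)
    return (-(c * x + s * y), s * x - c * y, -t)


def place_tool_pose(target_obj_in_base: Pose2, obj_in_tool: Pose2) -> Pose2:
    """Tool pose that puts the gripped object exactly at the target pose."""
    return compose(target_obj_in_base, inverse(obj_in_tool))


# Object sits 2 cm off-centre and rotated 0.1 rad in the gripper; place it at (0.5, 0.2, 0).
tool = place_tool_pose((0.5, 0.2, 0.0), (0.02, 0.0, 0.1))
```

The same composition generalizes to full 3D poses with 4x4 matrices; only the grip offset measured during localization is needed to correct for an object that was not picked up perfectly centred.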